Cybersecurity Research

Breaking the AI Trilemma

Resources: Paper and Presentation Sent with Transcript

Project Overview

This project examined how privacy-preserving machine learning systems behave under adversarial data poisoning, with an emphasis on explainability as a diagnostic tool. I focused on federated learning and differential privacy, where privacy constraints often obscure model failure modes. By integrating explainable AI into these settings, I studied how subtle data manipulation can undermine trust without obvious performance collapse. The goal was to understand how design choices affect reliability in security-critical systems rather than to optimize accuracy alone.

Methods and Work

Conducted research under Dr. Angela Newell (UT Austin) on privacy-preserving machine learning models, focusing on federated learning and differential privacy

Implemented explainability techniques to test model reasoning and robustness under adversarial data poisoning

Analyzed how poisoning attacks degrade interpretability and delay failure detection in high-stakes models

Interviewed cybersecurity specialists at AWS and Microsoft

Authored a research paper detailing findings, currently under review with Springer Nature Computer Science
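The attribution analysis listed above can be illustrated with a small sketch. This is not the code used in the study. It is a toy example that assumes a linear model, where a feature's attribution is simply weight times input, and it uses hypothetical weights for a "clean" and a "poisoned" model. The names `attribution_profile`, `attribution_drift`, and `demo` are invented for illustration. The point is only to show how accuracy can stay nearly flat while the attribution profile shifts sharply.

```python
import numpy as np

def attribution_profile(weights, X):
    """Mean absolute attribution per feature (weight * input), normalized to sum to 1."""
    attr = np.abs(X * weights).mean(axis=0)
    return attr / attr.sum()

def attribution_drift(baseline, current):
    """1 - cosine similarity between two profiles; 0 means identical direction."""
    cos = np.dot(baseline, current) / (
        np.linalg.norm(baseline) * np.linalg.norm(current)
    )
    return 1.0 - float(cos)

def accuracy(weights, X, y):
    """Accuracy of a linear classifier that predicts 1 when X @ weights > 0."""
    return float(np.mean((X @ weights > 0).astype(int) == y))

def demo(seed=0, n=500):
    rng = np.random.default_rng(seed)
    x0 = rng.normal(size=n)                # the feature that truly drives the label
    x1 = x0 + 0.05 * rng.normal(size=n)    # a near-duplicate proxy feature
    x2 = rng.normal(size=n)                # an irrelevant feature
    X = np.column_stack([x0, x1, x2])
    y = (x0 > 0).astype(int)

    # Hypothetical weights: the poisoned model has shifted onto the proxy feature.
    clean_w = np.array([2.0, 0.0, 0.0])
    poisoned_w = np.array([0.0, 2.0, 0.0])

    return {
        "clean_accuracy": accuracy(clean_w, X, y),
        "poisoned_accuracy": accuracy(poisoned_w, X, y),
        "drift": attribution_drift(
            attribution_profile(clean_w, X),
            attribution_profile(poisoned_w, X),
        ),
    }

if __name__ == "__main__":
    for name, value in demo().items():
        print(f"{name}: {value:.3f}")
```

In this toy setup an accuracy-only monitor would see almost no change, while a drift threshold on the attribution profile would flag the shift. A real system would replace the linear attributions with whatever explainability method the model supports.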

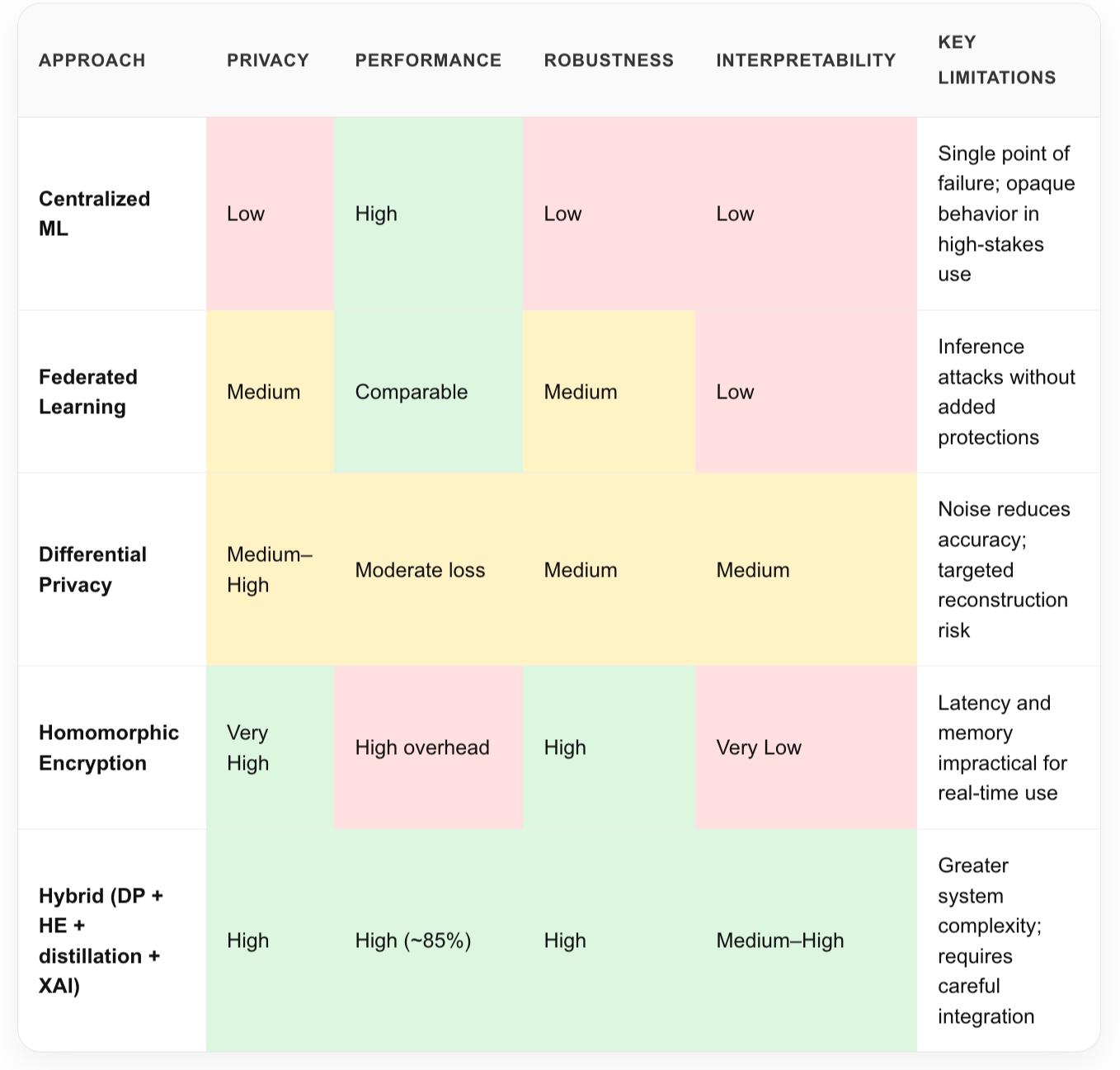

Comparative Evaluation of Different Privacy-Preserving ML Techniques

Comparative evaluation of privacy, robustness, interpretability, and efficiency across dominant ML approaches.

Key Findings

Failure in high-stakes ML systems often emerges at the system level, not in aggregate accuracy.

Under adversarial data poisoning, model reasoning and feature attributions degraded well before conventional performance metrics signaled failure, allowing attacks to persist undetected. This exposes a critical limitation of isolated, metric-driven evaluation in security-critical environments.

Trustworthy AI in cybersecurity requires architectural coordination, as isolated techniques leave exploitable gaps.

No single method can simultaneously satisfy privacy, robustness, explainability, and deployability under real-world constraints. Systems that proved most resilient combined multiple mechanisms, deliberately layered according to data sensitivity, operational context, and governance requirements.

Tiered privacy enables both protection and operational oversight.

Differential privacy preserved low-latency operation for medium-sensitivity features, while selective homomorphic encryption protected critical identifiers. Aligning protection strength with data sensitivity reduced computational overhead while avoiding blind spots introduced by uniform privacy mechanisms.

Robustness and efficiency must be co-designed.

Adversarial defenses improved security but increased model complexity, often delaying failure detection in deployed systems. Integrating compression and distillation was essential to preserve responsiveness in critical infrastructure such as power grids and healthcare systems.

Explainability should function as a diagnostic instead of a justification.

Embedding explainability into the system architecture enabled earlier detection of adversarial manipulation and supported effective human oversight under privacy constraints. Post-hoc explanations alone proved insufficient in encrypted or federated pipelines, because they obscure the layered reasoning behind micro-decisions.

Key constraints remain.

On resource-limited edge infrastructure, including grid sensors and medical systems, these protections impose extremely high energy costs. Rising uncertainty around long-term cryptographic viability under quantum threat models, combined with the absence of standardized governance for secure enclaves, continues to limit large-scale deployment.
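The tiered privacy finding above can be made concrete with a minimal sketch. It is an illustration under stated assumptions, not the system evaluated in the paper. The field names, tier labels, sensitivity values, and the `placeholder_encrypt` function are all hypothetical. Medium-sensitivity numeric fields receive Laplace noise with scale sensitivity / epsilon (the standard Laplace mechanism), and critical identifiers are routed to an encryption callable. The callable here is only a stand-in; a real deployment would call an actual homomorphic encryption library at that point.

```python
import numpy as np

def laplace_mechanism(value, sensitivity, epsilon, rng):
    """Add Laplace noise with scale = sensitivity / epsilon to a numeric value."""
    return float(value + rng.laplace(loc=0.0, scale=sensitivity / epsilon))

def placeholder_encrypt(value):
    """Stand-in only: a real system would return a ciphertext from an HE library."""
    return "<ciphertext>"

def protect_record(record, tiers, sensitivities, epsilon, encrypt, rng):
    """Apply a protection to each field according to its sensitivity tier."""
    protected = {}
    for name, value in record.items():
        tier = tiers[name]
        if tier == "critical":
            protected[name] = encrypt(value)          # strongest, most expensive
        elif tier == "medium":
            protected[name] = laplace_mechanism(
                value, sensitivities[name], epsilon, rng
            )                                         # cheap, low latency
        else:
            protected[name] = value                   # low sensitivity: unchanged
    return protected

# Hypothetical tier assignment and assumed value ranges used as sensitivities.
TIERS = {"patient_id": "critical", "age": "medium", "heart_rate": "medium", "ward": "low"}
SENSITIVITIES = {"age": 100.0, "heart_rate": 200.0}

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    record = {"patient_id": "A-1029", "age": 54, "heart_rate": 72, "ward": "C"}
    print(protect_record(record, TIERS, SENSITIVITIES, epsilon=1.0,
                         encrypt=placeholder_encrypt, rng=rng))
```

The design choice illustrated here is that only the fields marked critical pay the cost of encryption, which is how matching protection strength to data sensitivity can reduce overhead.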

Reflection

This work reshaped how I think about security in machine learning. I learned that robustness is not only a matter of performance metrics, but of whether uncertainty and failure can be detected before harm occurs. Explainability became less about interpreting outputs and more about making risk visible in systems that people cannot opt out of.